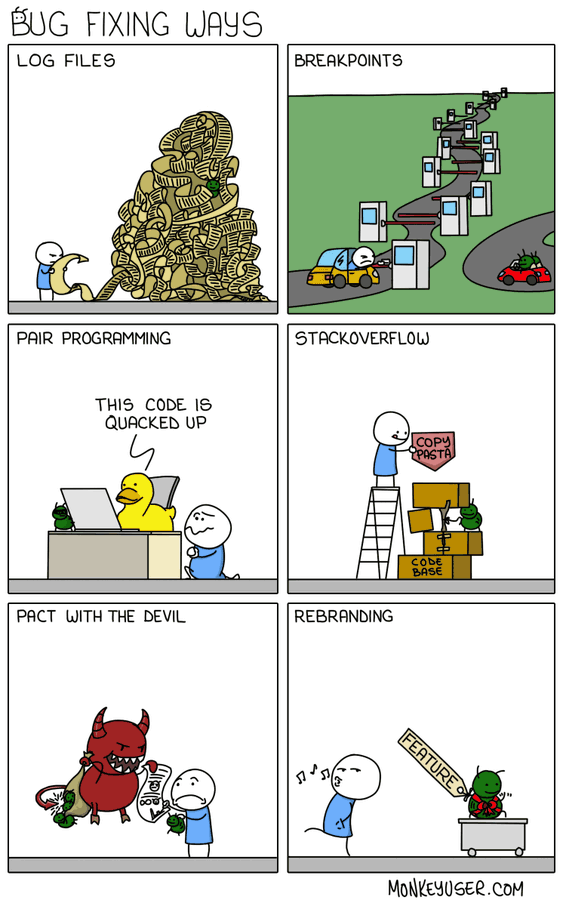

This refactor significantly boosts the codebase's strict health score from 18.6 to 70.3 by addressing technical debt accumulated across the backend. Key improvements include replacing bare exception handlers with specific types, standardizing timezone-aware datetime usage, removing deprecated dead code, and organizing imports. These changes lead to a more maintainable, predictable architecture and ensure standard API path conventions moving forward.

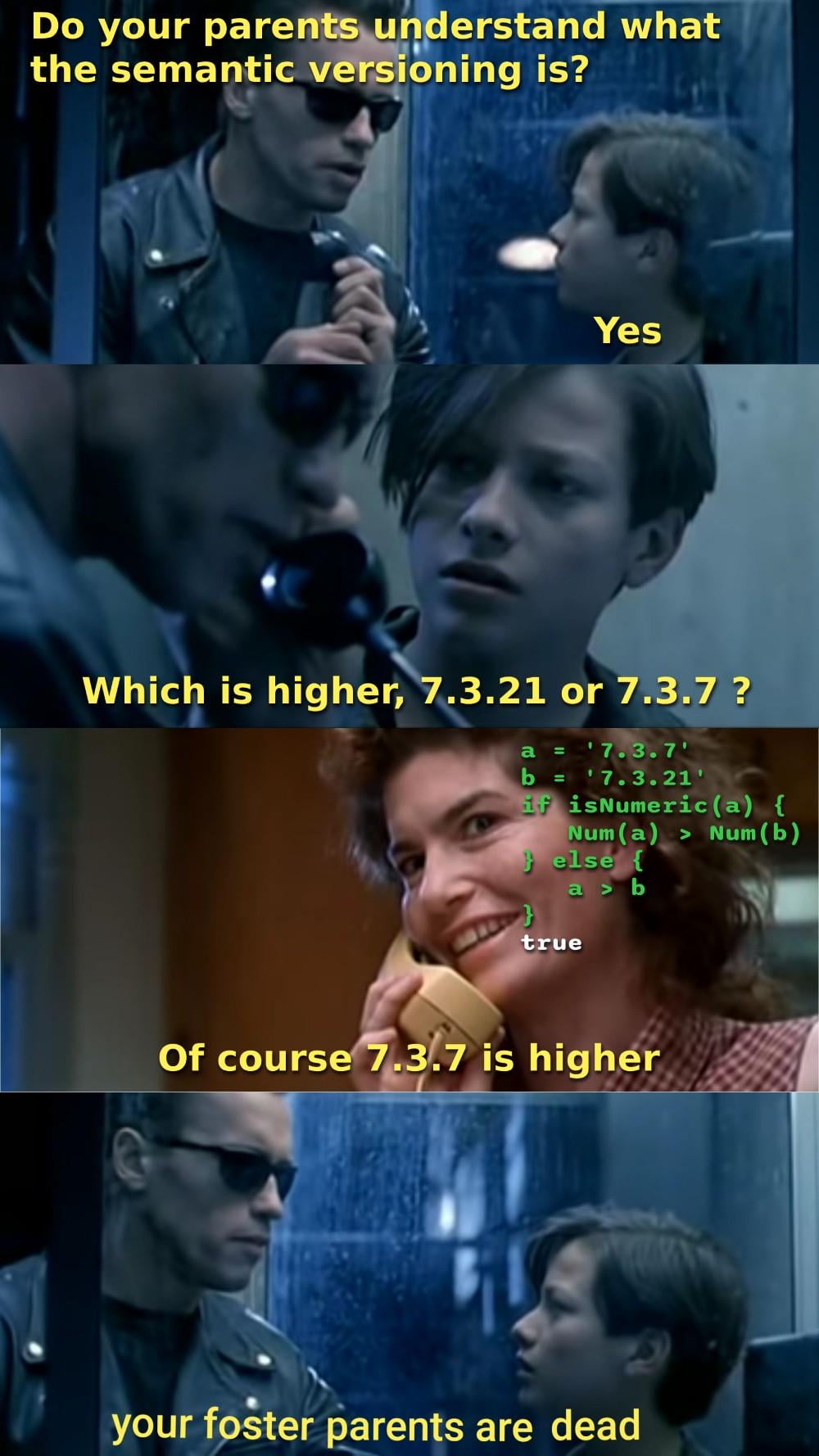

This update streamlines how test case inputs and outputs are structured by moving from dynamic objects to string-based JSON representations. This change improves compatibility with Gemini's structured-output mode and introduces robust validation logic to ensure data integrity during test case editing and AI-driven regeneration. These improvements provide a more reliable and consistent experience when managing unit tests.

Updated test case definitions in lib/code-ai.ts to use string-based JSON representations instead of dynamic-key objects. This change significantly improves compatibility with Gemini's structured-output mode and introduces robust validation logic to ensure data integrity. These modifications should reduce parser errors in AI-driven test execution.
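As a rough illustration of the string-based representation, the sketch below validates a test case whose input and expected output are stored as JSON-encoded strings. The `TestCase` shape, the field names, and `validateTestCase` are assumptions made for this example; they are not taken from lib/code-ai.ts.

```typescript
// Hypothetical shape: both fields hold JSON-encoded strings rather than
// dynamic-key objects, so the schema stays fixed for structured output.
interface TestCase {
  input: string; // e.g. '{"nums":[1,2,3]}'
  expectedOutput: string; // e.g. '6'
}

type ValidationResult =
  | { ok: true; input: unknown; expectedOutput: unknown }
  | { ok: false; error: string };

// Parses both fields and reports which one failed, if any.
function validateTestCase(tc: TestCase): ValidationResult {
  let input: unknown;
  let expectedOutput: unknown;
  try {
    input = JSON.parse(tc.input);
  } catch {
    return { ok: false, error: "input is not valid JSON" };
  }
  try {
    expectedOutput = JSON.parse(tc.expectedOutput);
  } catch {
    return { ok: false, error: "expectedOutput is not valid JSON" };
  }
  return { ok: true, input, expectedOutput };
}
```

Running a check like this on every edit and every AI regeneration would reject malformed strings before they reach test execution.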

We fixed SELinux access denials for sibling containers by adding the :z relabel option to host bind mounts for /tmp/jobs, ensuring execution containers have the necessary write permissions. Additionally, we updated the G++ configuration to support C++20, allowing modern C++ syntax in submitted code. These changes improve the reliability of code execution on restricted hosts such as AWS EC2.
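A minimal sketch of how these two settings might appear when the argument lists are built in code. The helper names, the container-side mount path, the image name, and the file paths are all assumptions for illustration; only the `:z` mount suffix and the `-std=c++20` flag come from the change itself.

```typescript
// Builds a bind-mount spec with the shared SELinux relabel option (:z).
function bindMount(hostPath: string, containerPath: string): string {
  return `${hostPath}:${containerPath}:z`;
}

// Arguments for `docker run`; the image name is supplied by the caller.
function dockerRunArgs(image: string, command: string[]): string[] {
  return [
    "run",
    "--rm",
    "-v",
    bindMount("/tmp/jobs", "/tmp/jobs"), // container path is assumed
    image,
    ...command,
  ];
}

// Compile command with the C++20 standard enabled (paths are hypothetical).
const compileCommand: string[] = [
  "g++",
  "-std=c++20",
  "-o",
  "/tmp/jobs/a.out",
  "/tmp/jobs/main.cpp",
];
```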

This update introduces automatic starter code generation for multi-language support in the problem-solving interface, alongside a major UI overhaul switching to a cleaner light theme. We've also improved Docker deployment by including necessary Prisma binary files and added a feature-gated sidebar navigation based on course availability.

This update introduces comprehensive management features for exams and unit tests, including new API endpoints and UI improvements for course administration. Additionally, we have integrated grade level support across student onboarding and course browsing for better academic alignment, along with bulk user promotion tools for administrators.

Ran the automated enrichment pipeline to refresh GitHub star counts and record the latest commit timestamps for supported projects in public/enrichment.json. This ensures metadata stays accurate for community tracking.

To better align with Resend's free tier limitations, I've updated the email notification script to handle user distribution in batches based on specific days. The script now includes new command-line arguments to control the daily schedule and ensures we skip users who have already been notified. This change allows for reliable, throttled email delivery while improving logging transparency.
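The batching idea can be sketched roughly as follows. The `User` shape, the `notifiedAt` field, and `selectBatchForDay` are hypothetical names, and the daily limit is passed in as a parameter rather than hard-coded, since the actual script's arguments are not shown here.

```typescript
// Hypothetical user record; notifiedAt is null until an email has been sent.
interface User {
  id: string;
  email: string;
  notifiedAt: Date | null;
}

// Returns the users to email on a given 1-based day of the schedule.
// Users are sliced by position first, so each user always belongs to the
// same day, and already-notified users are then skipped.
function selectBatchForDay(users: User[], day: number, dailyLimit: number): User[] {
  if (!Number.isInteger(day) || day < 1) {
    throw new RangeError("day must be a positive integer");
  }
  if (!Number.isInteger(dailyLimit) || dailyLimit < 1) {
    throw new RangeError("dailyLimit must be a positive integer");
  }
  const start = (day - 1) * dailyLimit;
  return users
    .slice(start, start + dailyLimit)
    .filter((user) => user.notifiedAt === null);
}
```

Slicing before filtering keeps day assignments stable across re-runs, at the cost of some batches being smaller than the limit. This assumes the user list is returned in a consistent order.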

We've officially hit version 2.0.0! This release brings a comprehensive UI redesign for better visual consistency across the platform, alongside a fully integrated transactional email system. Users will now receive automated welcome emails, alerts for friend requests and post milestones, and periodic re-engagement updates to keep them connected with our community.

We have successfully rolled out a robust email notification system to boost user engagement. This implementation covers critical user touchpoints, including welcome emails for new signups, alerts for friend requests and activity, as well as milestone notifications for post interactions. The system uses a centralized layout for consistent branding and includes a background cron job for re-engagement efforts.

This update makes the initial onboarding and Sidekick setup flows skippable, so users can navigate the platform before fully committing to configuration. We also introduced a centralized getSidekickSetupStatusByUserId check to gate active features, preventing incomplete setups from causing silent integration failures. Inngest was migrated to v4. Finally, we fixed a JavaScript type coercion bug in the onboarding resume logic that was preventing users from returning to their last saved step.
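The summary does not say which coercion was involved. One common form of this kind of bug is a saved step that arrives as a string (for example from storage or a query parameter) and is then compared against numbers, or a step index of 0 that is treated as missing. The sketch below shows a defensive way to normalize such a value; `resolveResumeStep` and its fallback to step 0 are assumptions, not the project's actual fix.

```typescript
// Normalizes a saved step of unknown type into a valid step index.
// Falls back to step 0 when the value is missing or out of range.
function resolveResumeStep(saved: unknown, totalSteps: number): number {
  // Convert non-empty strings such as "2" explicitly instead of relying on
  // implicit coercion in later comparisons.
  const candidate =
    typeof saved === "string" && saved.trim() !== "" ? Number(saved) : saved;

  if (
    typeof candidate !== "number" ||
    !Number.isInteger(candidate) ||
    candidate < 0 ||
    candidate >= totalSteps
  ) {
    return 0;
  }
  return candidate;
}
```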

Streamlined the WaitlistModal component by consolidating the close logic into a unified handler. This update ensures that component state (email input, loading status, errors) properly resets on every close action, while also optimizing input focus management for a smoother user experience.
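A framework-free sketch of the unified close handler idea. The state shape and the names `WaitlistModalState`, `INITIAL_STATE`, and `closeModal` are assumptions; the real component presumably keeps this state in React hooks.

```typescript
// Hypothetical modal state mirroring the fields named in the summary.
interface WaitlistModalState {
  isOpen: boolean;
  email: string;
  isLoading: boolean;
  error: string | null;
}

const INITIAL_STATE: WaitlistModalState = {
  isOpen: false,
  email: "",
  isLoading: false,
  error: null,
};

// Single close path: every trigger (close button, overlay click, Escape key)
// would call this, so the state is always reset the same way.
function closeModal(): WaitlistModalState {
  return { ...INITIAL_STATE };
}
```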

Improved the NavBar component by introducing dynamic mobile detection using a browser resize listener. This allows the layout to seamlessly switch between the full-width desktop view and a simplified mobile interface, ensuring a better experience across different devices.

Added comprehensive SEO metadata, OpenGraph tags, Twitter cards, and structured JSON-LD data to the main layout to improve site discoverability and social sharing. Cleaned up project structure by relocating favicon assets to the public directory and removing the unused favicon in the app folder.
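For readers unfamiliar with JSON-LD, a minimal sketch of building the structured data and serializing it for a `<script type="application/ld+json">` tag might look like this. The `SiteInfo` shape and both function names are hypothetical, and the choice of the schema.org `WebSite` type is an assumption about what the layout describes.

```typescript
// Hypothetical site details used to populate the structured data.
interface SiteInfo {
  name: string;
  url: string;
  description: string;
}

// Builds a schema.org WebSite object.
function buildWebSiteJsonLd(site: SiteInfo): Record<string, string> {
  return {
    "@context": "https://schema.org",
    "@type": "WebSite",
    name: site.name,
    url: site.url,
    description: site.description,
  };
}

// Serializes the object for embedding in a script tag. Escaping "<" keeps a
// "</script>" sequence inside the data from closing the tag early.
function toJsonLdScriptContent(data: Record<string, string>): string {
  return JSON.stringify(data).replace(/</g, "\\u003c");
}
```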

Introduced a new Toaster notification component to provide better UI feedback throughout the application. Additionally, refactored the WaitlistModal to use this new system for displaying success messages, replacing the previous custom implementation. This ensures a consistent notification experience for end users across the platform.

This update implements the WaitlistProvider to streamline lead collection, backed by MongoDB integration for persistence. Additionally, the Hero, CTA, and Navigation sections received a design refresh, and outdated assets were cleaned up to improve build efficiency.

Updated the dashboard data to reflect the current state of the portfolio, including recent position changes and returns. This sync ensures that the analytics and equity breakdown displayed on the dashboard are current.

We've significantly upgraded the MonkPayments landing page by integrating Lenis for smooth scroll behavior and adopting MonkPayments design tokens for a more cohesive aesthetic. The main page layout was refactored to prioritize high-impact sections like ChatDemo and Comparison, while streamlining the NavBar and HeroSection interaction design.

The dashboard_data.json file has been updated with the latest portfolio metrics, showing 334 active positions with a total return of approximately -1.6%. The update reflects the current state of tracked assets and performance indicators.

Refreshed the dashboard dataset to reflect the current state of our 334 open positions and system performance metrics. The snapshot shows a total return of -1.6% and significant exposure, reflecting a challenging market environment.